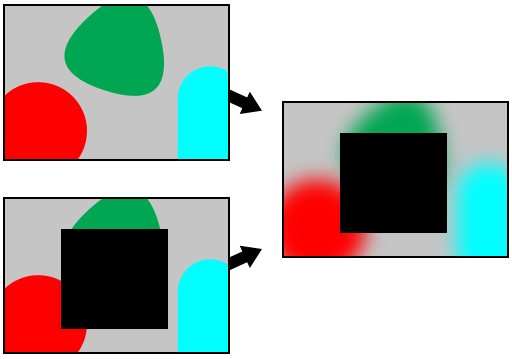

Creating a mask from the difference between two images (iPhone)

How can I detect the difference between 2 images, creating a mask of the area that's different in order to process the area that's common to both images (gaussian blur for example)?

EDIT: I'm currently using this code to get the RGBA value of pixels:

    + (NSArray *)getRGBAsFromImage:(UIImage *)image atX:(int)xx andY:(int)yy count:(int)count
    {
        NSMutableArray *result = [NSMutableArray arrayWithCapacity:count];

        // First get the image into your data buffer
        CGImageRef imageRef = [image CGImage];
        NSUInteger width = CGImageGetWidth(imageRef);
        NSUInteger height = CGImageGetHeight(imageRef);
        CGColorSpaceRef colorSpace = CGColorSpaceCreateDeviceRGB();
        NSUInteger bytesPerPixel = 4;
        NSUInteger bytesPerRow = bytesPerPixel * width;
        NSUInteger bitsPerComponent = 8;
        // calloc zero-fills the buffer, so transparent parts of the image
        // are not blended over uninitialized memory
        unsigned char *rawData = calloc(height * width * bytesPerPixel, sizeof(unsigned char));
        CGContextRef context = CGBitmapContextCreate(rawData, width, height,
                                                     bitsPerComponent, bytesPerRow, colorSpace,
                                                     kCGImageAlphaPremultipliedLast | kCGBitmapByteOrder32Big);
        CGColorSpaceRelease(colorSpace);

        CGContextDrawImage(context, CGRectMake(0, 0, width, height), imageRef);
        CGContextRelease(context);

        // Now your rawData contains the image data in the RGBA8888 pixel format.
        NSUInteger byteIndex = (bytesPerRow * yy) + xx * bytesPerPixel;
        NSUInteger byteCount = height * bytesPerRow;
        // Stop at the end of the buffer even if count runs past the last pixel
        for (int ii = 0; ii < count && byteIndex + bytesPerPixel <= byteCount; ++ii)
        {
            CGFloat red   = rawData[byteIndex]     / 255.0;
            CGFloat green = rawData[byteIndex + 1] / 255.0;
            CGFloat blue  = rawData[byteIndex + 2] / 255.0;
            CGFloat alpha = rawData[byteIndex + 3] / 255.0;
            byteIndex += bytesPerPixel;

            UIColor *acolor = [UIColor colorWithRed:red green:green blue:blue alpha:alpha];
            [result addObject:acolor];
        }

        free(rawData);
        return result;
    }

The problem is that the images are captured from the iPhone's camera, so they are not in exactly the same position. I need to divide each image into areas of a few pixels and extract the general color of each area (maybe by adding up the RGBA values and dividing by the number of pixels?). How could I do this and then translate the result into a CGMask?
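The averaging idea in the question can be sketched in plain C, which compiles unchanged inside an Objective-C file. This is only a rough illustration: `averageBlock` and its parameters are made-up names, and it assumes a tightly packed RGBA8888 buffer like `rawData` in the method above.

```c
#include <stddef.h>
#include <stdint.h>

/* Average the RGBA values of the blockSize x blockSize tile whose top-left
   corner is (x0, y0), clipped to the image bounds. `rgba` is assumed to be a
   tightly packed RGBA8888 buffer (4 bytes per pixel, no row padding).
   The four averaged bytes are written to `out`. */
void averageBlock(const uint8_t *rgba, size_t width, size_t height,
                  size_t x0, size_t y0, size_t blockSize, uint8_t out[4])
{
    unsigned long sum[4] = {0, 0, 0, 0};
    size_t n = 0;

    for (size_t y = y0; y < y0 + blockSize && y < height; ++y) {
        for (size_t x = x0; x < x0 + blockSize && x < width; ++x) {
            const uint8_t *p = rgba + (y * width + x) * 4;
            for (int c = 0; c < 4; ++c)
                sum[c] += p[c];
            ++n;
        }
    }

    /* Round to nearest; an empty tile (fully outside the image) yields zeros. */
    for (int c = 0; c < 4; ++c)
        out[c] = n ? (uint8_t)((sum[c] + n / 2) / n) : 0;
}
```

Comparing tile averages rather than single pixels makes the comparison less sensitive to a shift of a pixel or two between shots, at the cost of a coarser mask.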

I know this is a complex question, so any help is appreciated.

Thanks.

Comments (5)

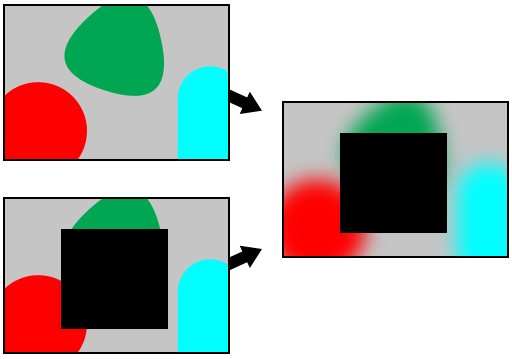

I think the simplest way to do this would be to use a difference blend mode. The following code is based on code I use in CKImageAdditions.
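This is not the CKImageAdditions code, just a minimal sketch of what a difference blend computes, written in plain C over raw RGBA8888 buffers. In Core Graphics the same effect comes from drawing the first image, calling `CGContextSetBlendMode` with `kCGBlendModeDifference`, and drawing the second image on top. The function name `differenceRGBA` is hypothetical.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Per-channel absolute difference of two RGBA8888 buffers holding the same
   number of pixels. Identical pixels come out black; the more two pixels
   differ, the brighter the result. Alpha is forced to opaque so the output
   can be displayed directly. */
void differenceRGBA(const uint8_t *a, const uint8_t *b,
                    uint8_t *out, size_t pixelCount)
{
    for (size_t i = 0; i < pixelCount; ++i) {
        for (int c = 0; c < 3; ++c)
            out[i * 4 + c] = (uint8_t)abs((int)a[i * 4 + c] - (int)b[i * 4 + c]);
        out[i * 4 + 3] = 255;
    }
}
```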

There are three reasons pixels will change from one iPhone photo to the next: the subject changed, the iPhone moved, or random noise crept in. I assume that for this question you're most interested in the subject changes, and that you want to process out the effects of the other two. I also assume the app expects the user to keep the iPhone reasonably still, so changes from iPhone movement are less significant than subject changes.

To reduce the effects of random noise, just blur the image a little. A simple averaging blur, where each pixel in the resulting image is the average of the original pixel and its nearest neighbors, should be sufficient to smooth out any noise in a reasonably well-lit iPhone image.
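A minimal sketch of such an averaging blur, assuming a tightly packed RGBA8888 buffer and a 3x3 neighborhood (the name `boxBlur3x3` and the choice to average only in-bounds neighbors at the edges are mine, not from the answer):

```c
#include <stddef.h>
#include <stdint.h>

/* 3x3 box blur over a tightly packed RGBA8888 image. Each output pixel is the
   rounded average of the input pixel and whichever of its 8 neighbors lie
   inside the image. `src` and `dst` must be separate buffers. */
void boxBlur3x3(const uint8_t *src, uint8_t *dst, size_t width, size_t height)
{
    for (size_t y = 0; y < height; ++y) {
        for (size_t x = 0; x < width; ++x) {
            unsigned sum[4] = {0, 0, 0, 0};
            unsigned n = 0;

            for (int dy = -1; dy <= 1; ++dy) {
                for (int dx = -1; dx <= 1; ++dx) {
                    long ny = (long)y + dy;
                    long nx = (long)x + dx;
                    if (ny < 0 || nx < 0 || ny >= (long)height || nx >= (long)width)
                        continue; /* neighbor falls outside the image */
                    const uint8_t *p = src + ((size_t)ny * width + (size_t)nx) * 4;
                    for (int c = 0; c < 4; ++c)
                        sum[c] += p[c];
                    ++n;
                }
            }

            for (int c = 0; c < 4; ++c)
                dst[(y * width + x) * 4 + c] = (uint8_t)((sum[c] + n / 2) / n);
        }
    }
}
```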

To address iPhone movement, you can run a feature detection algorithm on each image (look up feature detection on Wikipedia for a start). Then calculate the transform needed to align the detected features that changed the least.
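Feature-based alignment is too large for a short snippet, so here is a much cruder stand-in, not the technique described above: a brute-force search for the small integer translation that minimizes the mean absolute difference between two grayscale buffers. It ignores rotation and scale entirely. `shiftedMAD`, `estimateShift`, and the single-channel input are assumptions made for illustration.

```c
#include <stdint.h>
#include <stdlib.h>

/* Mean absolute difference between a(x, y) and b(x + dx, y + dy), taken over
   the region where both coordinates fall inside the image. */
double shiftedMAD(const uint8_t *a, const uint8_t *b,
                  int width, int height, int dx, int dy)
{
    unsigned long sum = 0, n = 0;
    for (int y = 0; y < height; ++y) {
        int by = y + dy;
        if (by < 0 || by >= height) continue;
        for (int x = 0; x < width; ++x) {
            int bx = x + dx;
            if (bx < 0 || bx >= width) continue;
            sum += (unsigned long)abs((int)a[y * width + x] - (int)b[by * width + bx]);
            ++n;
        }
    }
    return n ? (double)sum / (double)n : 1e9; /* no overlap: treat as worst case */
}

/* Try every offset in [-maxShift, maxShift] on both axes and report the one
   with the lowest mean absolute difference. */
void estimateShift(const uint8_t *a, const uint8_t *b, int width, int height,
                   int maxShift, int *bestDx, int *bestDy)
{
    double best = shiftedMAD(a, b, width, height, 0, 0);
    *bestDx = 0;
    *bestDy = 0;
    for (int dy = -maxShift; dy <= maxShift; ++dy) {
        for (int dx = -maxShift; dx <= maxShift; ++dx) {
            double score = shiftedMAD(a, b, width, height, dx, dy);
            if (score < best) {
                best = score;
                *bestDx = dx;
                *bestDy = dy;
            }
        }
    }
}
```

The cost grows with the square of `maxShift`, so this only makes sense when the phone was held nearly still, which is the assumption the answer already makes.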

Apply that transform to the blurred images, and find the difference between them. Any pixels with a sufficient difference will become your mask. You can then process the mask to eliminate any islands of changed pixels and to fill any holes. For example, a subject may be wearing a solid-colored shirt. The subject may move from one image to the next, but the areas covered by the shirt may overlap, resulting in a mask with a hole in the middle.
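As a rough sketch of these two steps: `thresholdMask` turns the per-pixel color difference into an 8-bit mask, and `majority3x3` is one simple cleanup pass (a 3x3 majority vote) that removes isolated pixels and fills one-pixel holes. Both names, the summed-RGB distance, and the majority filter itself are my assumptions; larger islands and holes would need proper morphological operations or several passes.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* mask[i] = 255 where the summed absolute RGB difference between the two
   RGBA8888 images exceeds `threshold`, otherwise 0. */
void thresholdMask(const uint8_t *a, const uint8_t *b, uint8_t *mask,
                   size_t pixelCount, int threshold)
{
    for (size_t i = 0; i < pixelCount; ++i) {
        int d = 0;
        for (int c = 0; c < 3; ++c)
            d += abs((int)a[i * 4 + c] - (int)b[i * 4 + c]);
        mask[i] = (d > threshold) ? 255 : 0;
    }
}

/* 3x3 majority vote on a single-channel mask: a pixel is set in `dst` when
   more than half of the in-bounds pixels in its neighborhood (itself
   included) are set in `src`. `src` and `dst` must be separate buffers. */
void majority3x3(const uint8_t *src, uint8_t *dst, int width, int height)
{
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            int on = 0, n = 0;
            for (int dy = -1; dy <= 1; ++dy) {
                for (int dx = -1; dx <= 1; ++dx) {
                    int ny = y + dy, nx = x + dx;
                    if (ny < 0 || nx < 0 || ny >= height || nx >= width)
                        continue;
                    ++n;
                    if (src[ny * width + nx])
                        ++on;
                }
            }
            dst[y * width + x] = (2 * on > n) ? 255 : 0;
        }
    }
}
```

The resulting one-byte-per-pixel buffer is the kind of data `CGImageMaskCreate` accepts through a data provider. Check the Quartz documentation for mask polarity before wiring it up, since image masks and masking images interpret sample values differently.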

In other words, this is a significant and difficult image processing problem. You won't find the answer in a stackoverflow.com post. You will find the answer in a digital image processing textbook.

Can't you just subtract the pixel values of one image from the other, and process the pixels where the difference is 0?

Every pixel that does not have a suitably similar pixel within a certain radius in the other image can be deemed part of the mask. It's slow (though there's not much that would be faster), but it's fairly simple.
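A minimal sketch of that radius search, assuming single-channel (grayscale) buffers to keep it short. `radiusMask`, `radius`, and `tolerance` are illustrative names, and "suitably similar" is taken to mean an absolute difference of at most `tolerance`.

```c
#include <stdint.h>
#include <stdlib.h>

/* For each pixel of `a`, look for a pixel in `b` within `radius` pixels (a
   square window) whose value differs by at most `tolerance`. Pixels with no
   such match are marked 255 in `mask`; matched pixels are marked 0. */
void radiusMask(const uint8_t *a, const uint8_t *b, uint8_t *mask,
                int width, int height, int radius, int tolerance)
{
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            int found = 0;
            for (int dy = -radius; dy <= radius && !found; ++dy) {
                for (int dx = -radius; dx <= radius && !found; ++dx) {
                    int ny = y + dy, nx = x + dx;
                    if (ny < 0 || nx < 0 || ny >= height || nx >= width)
                        continue;
                    if (abs((int)a[y * width + x] - (int)b[ny * width + nx]) <= tolerance)
                        found = 1;
                }
            }
            mask[y * width + x] = found ? 0 : 255;
        }
    }
}
```

The work per pixel grows with the square of the radius, which is where the slowness mentioned above comes from.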

Go through the pixels, copy the ones that are different in the lower image to a new one (not opaque).

Blur the upper one completely, then show the new one above.
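The first step could look roughly like this in plain C, assuming both images are already in RGBA8888 buffers of the same size. `buildOverlay` and the `threshold` parameter are hypothetical; the answer does not say how different two pixels must be to count.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Copy pixels of `lower` that differ from `upper` by more than `threshold`
   (summed absolute RGB difference) into `overlay`; every other overlay pixel
   is left fully transparent. All buffers are RGBA8888 with the same pixel
   count. */
void buildOverlay(const uint8_t *lower, const uint8_t *upper,
                  uint8_t *overlay, size_t pixelCount, int threshold)
{
    for (size_t i = 0; i < pixelCount; ++i) {
        int d = 0;
        for (int c = 0; c < 3; ++c)
            d += abs((int)lower[i * 4 + c] - (int)upper[i * 4 + c]);

        for (int c = 0; c < 4; ++c)
            overlay[i * 4 + c] = (d > threshold) ? lower[i * 4 + c] : 0;
    }
}
```

Drawing the blurred upper image first and this overlay on top of it leaves the differing pixels sharp and everything else blurred.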